Talha Zaidi

I am an AI researcher and Ph.D. candidate in Computer Science at Kansas State University, advised by Prof. Arslan Munir in the ISCAAS Lab. I expect to graduate in August 2026. Previously, I completed my M.S. in Biomedical Engineering at Istanbul Medipol University, Turkey, and my B.S. in Mechatronics and Control Engineering at UET Lahore.

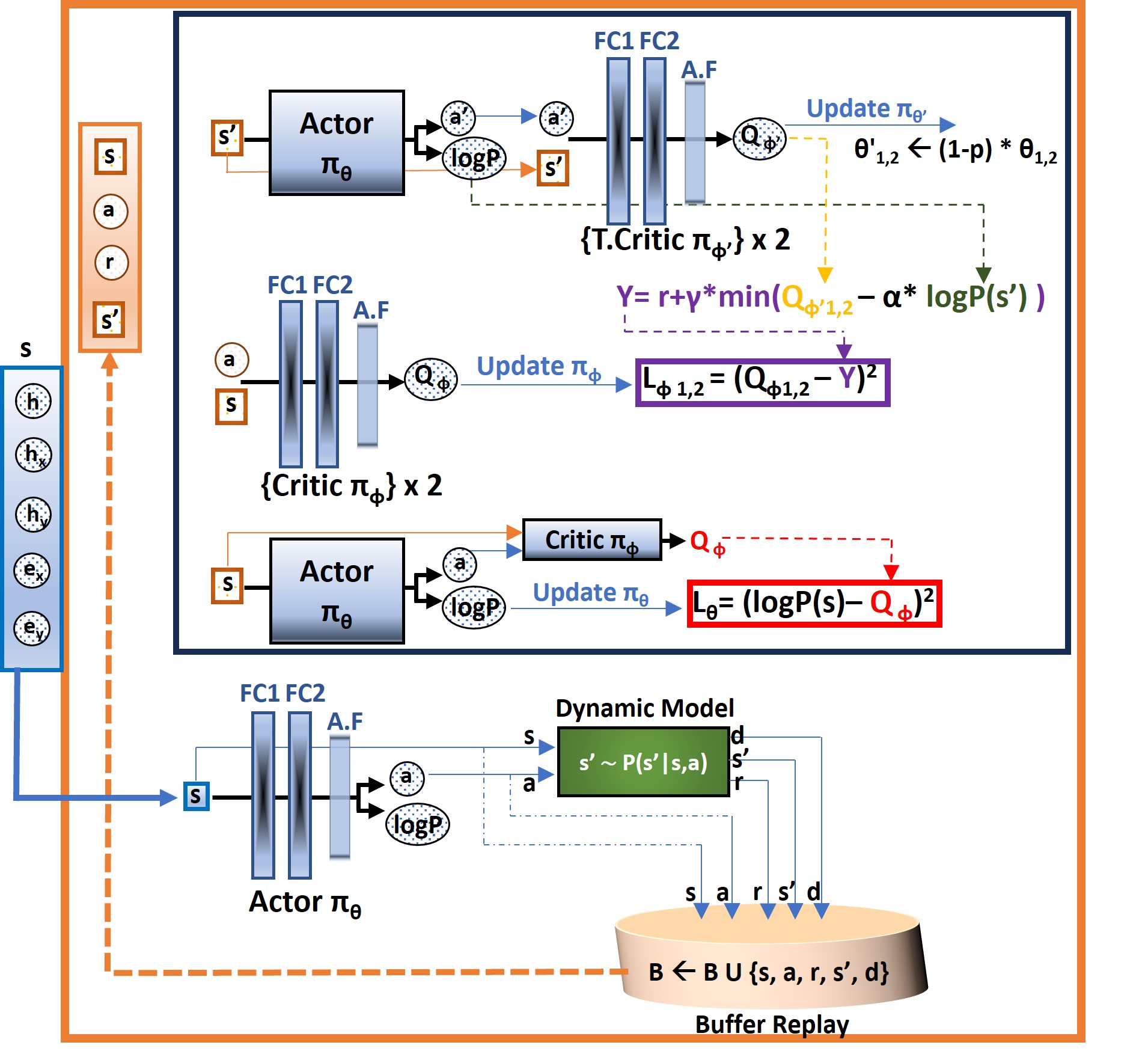

Research Interests

My research develops reinforcement learning, generative modeling, and foundation-model adaptation methods for reliable decision-making in complex real-world systems. I focus on sequential decision modeling, policy refinement, and latent representation learning for offline/online RL, long-horizon planning, and reasoning under uncertainty, with applications in embodied AI, robotics, autonomous systems, and cyber-physical intelligence.

News

Recent Updates

Selected updates

- June 2026: Selected to attend the 2026 IEEE Summer School on Telerobotics and Cyborg Technologies at Rochester Institute of Technology (RIT).

- May 2026: Our RL robotics paper GRALP was accepted at the 35th International Joint Conference on Artificial Intelligence (IJCAI 2026).

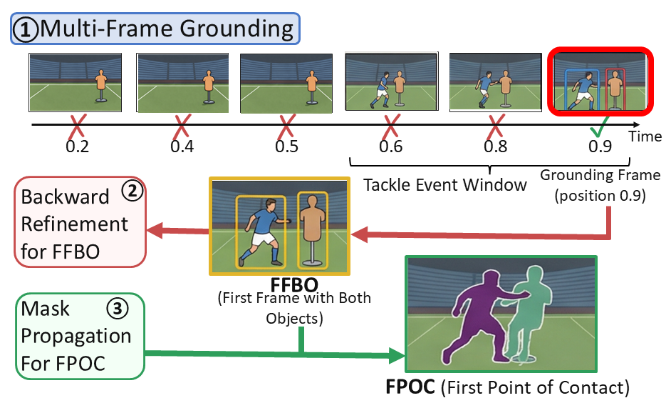

- April 2026: Paper on grounded and motion-aware video understanding accepted at CVsports @ CVPR 2026.

- Mar 2026: Selected as Graduate Student of the Month at Kansas State University for research and scholarly contributions.

- Mar 2026: Received a Best Presentation Award at APEC 2026 for work on resilient coordination and security of grid-forming inverter networks.

- Feb 2026: CRISP, our work on long-horizon offline planning and context-robust skill inference, was submitted to the IROS 2026 Main Track.

- 2025–2026: Expanding the research portfolio toward robotics, vision-language-action models, and cyber-physical systems.

Multidisciplinary Research

The center remains fixed on Generative AI + Reinforcement Learning, while the surrounding domains show where these core methods are applied and extended. The goal is not to present unrelated topics, but to show a connected research program spanning robotics, cyber-physical systems, LLM and VLM agents, health AI, autonomous systems, and resilient infrastructure through shared ideas in planning, representation learning, robustness, and decision-making.

AI Trajectory Optimization

Resilient Infrastructure

Neuroprosthetics Control

Research & Publications

Latent-skill offline RL framework for contact-rich robotic manipulation. Improves long-horizon planning while preserving behavior support and low-level execution quality across D4RL, Adroit, and RoboSuite benchmarks.

Masked latent-skill inference for robust long-horizon planning under missing or degraded context. Focuses on partial observability in offline reinforcement learning and context-robust skill sequencing.

Developed the SA3C algorithm with an attention mechanism to improve sample efficiency and decision quality for low-thrust spacecraft trajectory optimization in geocentric and cislunar missions.

Developed a novel Cascaded Deep Reinforcement Learning (CDRL) approach to optimize low-thrust spacecraft trajectory planning, significantly improving time-efficient orbit transfers in complex multi-body environments for transfers to GEO and NRHO.

Introduced a resilient neural coordination framework for grid-forming inverter networks that maintains stability and coordination under cyberattacks in smart-grid environments.

Developed a machine-learning-assisted method for optimizing low-thrust orbit-raising trajectories, integrating a sequential algorithm with a neural network-based high-level planner and benchmarking it against deep reinforcement learning approaches for geostationary and halo-orbit missions.

Developed a cascaded DRL model for optimizing long-duration, low-thrust spacecraft transfers from GTO to GEO. Guided by a gradient-aided reward function, the method significantly reduces transfer time and improves spacecraft autonomy in complex multi-revolution transfers.

Adaptive multi-teacher knowledge distillation framework aimed at improving adversarial robustness beyond standard single-teacher or conventional training approaches.

Proposed a simple and efficient domain generalization approach that augments source domains by exploring dominant modes of variation in the feature space, improving generalization to unseen domains across standard DG benchmarks.

Developed and compared machine learning models for laboratory earthquake prediction using LANL data, where CNN-LSTM models improved time-to-failure prediction over hand-crafted approaches.

Demonstrated trajectory-based neuroprosthetic control in rodents using primary motor cortex activity, providing a cost-effective platform for studying brain-machine interfaces and neural control.

Experience

- Developed GRALP, a latent-skill offline RL framework for contact-rich robotic manipulation, with roughly 8% higher average performance on D4RL, Adroit, and RoboSuite. Related work includes submissions to IJCAI 2026 and IROS 2026, alongside accepted work at CVsports @ CVPR 2026.

- Led the NASA-funded SA3C project: an attention-based RL agent for low-thrust spacecraft trajectory optimization that reduced transfer time by 10% over strong baselines. Published in IEEE Transactions on Aerospace and Electronic Systems (2025).

- Engineered an AI-driven security controller for smart grids (DOE-funded), achieving high attack-detection rates, low false positives on inverter-level anomalies, and faster post-disturbance recovery in MATLAB/Simulink + PyTorch simulations.

- Authoring research proposals to secure federal and industry funding for robust RL and autonomous systems research.

- Engineered a cortically driven robotic arm control system using primary motor cortex signals from freely moving rats. This was the first trajectory-based neuroprosthetic control in rodents, with >78% accuracy. Published in Journal of Neuroscience Methods.

- Built CNN-LSTM models for aperiodic earthquake time-prediction using LANL data, significantly outperforming traditional signal-processing baselines. Published at IEEE SIU 2020.

- Provided technical support and troubleshooting for complex automated industrial systems, building deep familiarity with real-world control and automation constraints.